Developer Alignment First, Productivity Later

A recent online debate on Developer Productivity focused on how to measure it. But in large enterprise environments, there is a bigger opportunity around Developer Alignment.

Hi Everyone,

I am resuming this newsletter after a lengthy hiatus. The hiatus was to finish writing a book, Becoming a Software Company, which is now available everywhere books are sold. It outlines principles of building good software for enterprise companies to accelerate their journey to think and operate like a software company.

Going forward, this Substack will focus on curating insights and ideas to unlock enterprise business innovation in the software era.

Developer Alignment First, Productivity Later

A McKinsey article triggered a useful conversation on developer productivity. The article, titled “Yes, You Can Measure Software Developer Productivity”, claimed that software developer productivity can be measured.

With a title like that, one would expect it to have robust ideas for measuring developer productivity.

Sadly, it fell short.

Not unexpectedly, a couple of influential voices from the software world (Kent Beck and Gergely Orosz) published a passionate two-part response to the McKinsey article. Kent is one of the 17 authors of the Agile Manifesto and is revered as the creator of the Extreme Programming methodology. Gergely writes the best software engineering newsletter on Substack and is a leading voice on Developer Experience.

The Beck-Orosz response to McKinsey’s article centers on its metrics fixation. Metrics create incentives that change behaviors, which can lead to unintended consequences. The domain doesn’t matter: when people fixate on metric scores, they start to ignore the real things that matter. McKinsey cited sales as a business function that is well measured compared to software development. However, the article didn’t discuss the unintended behaviors that result from metrics-oriented sales management, e.g., sandbagging and overpromising to meet quotas.

Metrics become ineffective over time because they get gamed. Encapsulated as Goodhart’s law, this insight is not novel. Jerry Muller has written a terrific book on this topic, The Tyranny of Metrics, that cautions against quantifying and incentivizing human performance solely based on metrics.

Metrics must be implemented carefully by thoroughly evaluating their intended impact and outcomes. Not surprisingly, that is what Beck-Orosz's response advocates. (Beck Version: Part 1, Part 2; Orosz Version: Part 1, Part 2)

There are no easy answers for measuring developer productivity. There are many tradeoffs, and although executives and senior leaders may prefer otherwise, software development can’t be measured and tracked like typical factory work. For knowledge work, which software development is, productivity can only be measured in terms of business outcomes and impact.

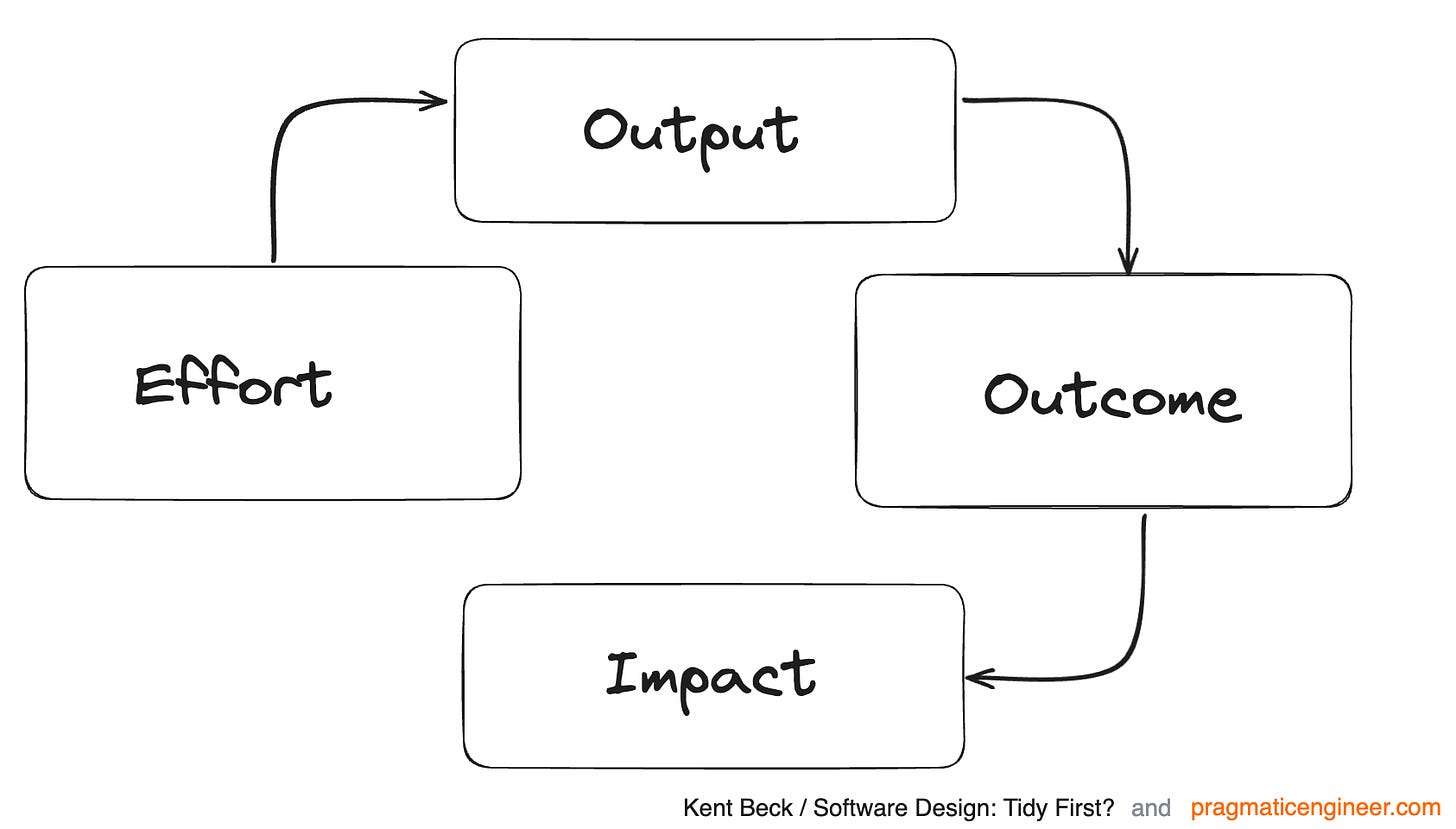

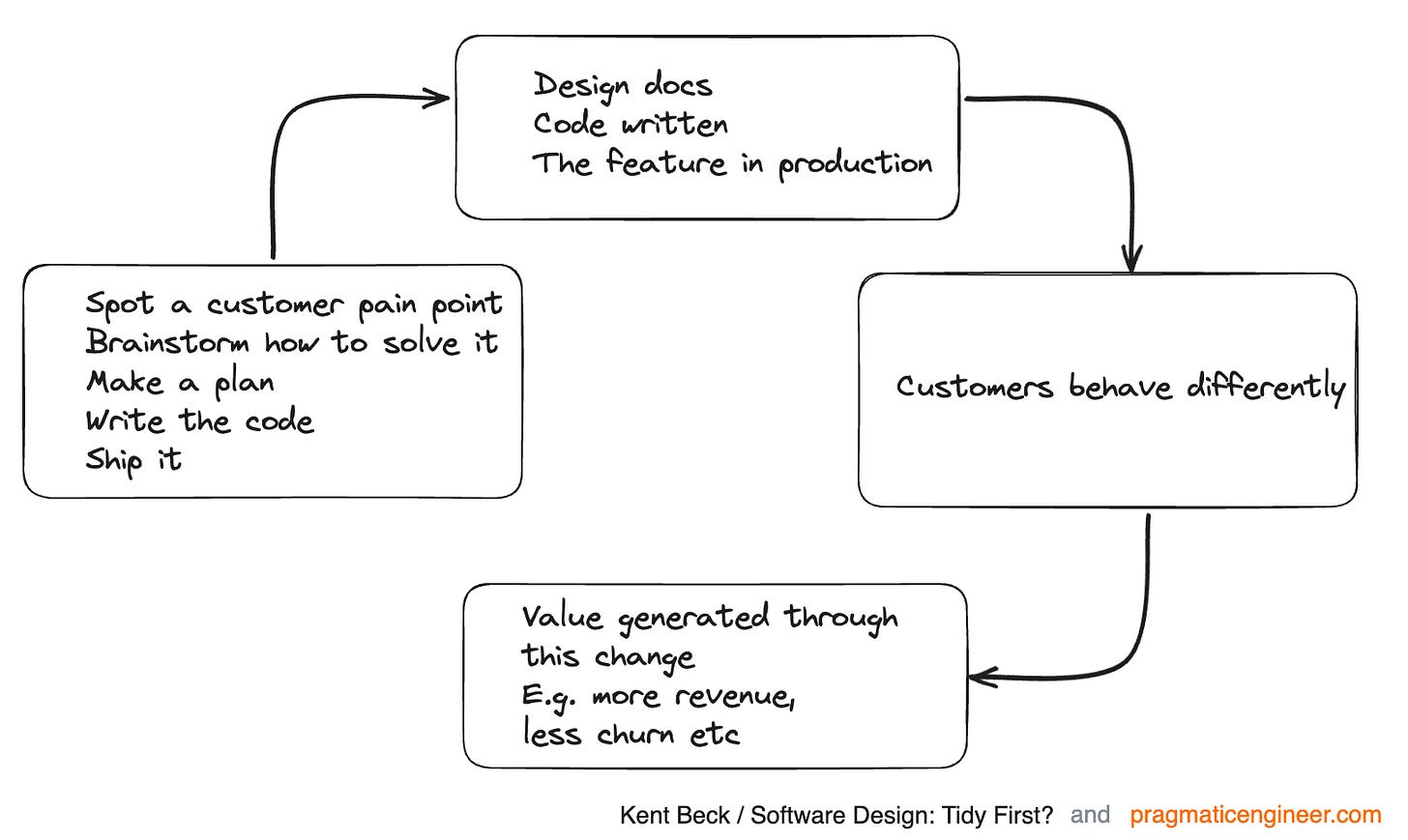

The Beck-Orosz proposal relies on an effort-output-outcome-impact model to understand software development as a measurement domain. Their prescription is that any open measurement has to prioritize measuring outcome-and-impact over just tracking effort-and-output.

That is the area I want to home in on: how well developers and teams understand the intended business impact of their development effort.

In large enterprise environments, which are the target audience for the McKinsey article, there is often a missing link between effort-and-output and outcome-and-impact. That is because the people who think about outcome-and-impact don’t collaborate closely with those who produce effort-and-output. This lack of collaboration is often referred to as the business-IT divide.

The business provides the requirements that IT has to deliver. The delivery work is done in a low-trust environment because of the political power structure between business and IT. That means communications must be more explicit: elaborate reports and documentation, reviewed in endless cycles of status meetings that take a toll on productivity.

More often than not, external teams of contractors and consultants deliver the work to varying degrees. These teams aren’t incentivized to think about the outcome-and-impact dimension either. Their incentive is to deliver on the contracted statements of work (SoWs).

In such environments, the bigger opportunity is in aligning the effort-and-output dimension with the outcome-and-impact dimension. Productivity measurements won’t work until the measured development effort is traceable to the business impact. Therefore, management’s responsibility is to create that alignment before obsessing about productivity. Management also has to design structures that let teams seek clarity on the desired business impact when alignment is missing.

Ultimately, I feel the McKinsey article is what it is—a typically facile answer to position new consulting work with their target audience. And, even though the Beck-Orosz response was a thorough and balanced critique, it didn’t acknowledge the need for deeper alignment between business impact and development effort.

Outside Links

An Oracle ERP implementation may land the Birmingham City Council in bankruptcy.

Estimated expenses rose five-fold, from an initial £20 million to around £100 million. Just wow!

Non-software companies like BCC don’t grasp the complexity of developing large software-intensive systems. These types of projects are challenging, and initial estimates are never right. Enforcing contracts based on these estimates is counterproductive. It is better to tackle the project in small batches, each aiming to mitigate some aspect of the complexity.

Another mistake BCC made was translating existing ways of doing things into newer technology. They should have understood the amount of bloat in the existing system first. To improve systems, you must remove things before starting to add. And the new platform must enable new things that weren't possible before. Otherwise, why bother?

This is a classic case of a big "T" transformation gone awry. (Link)

Salesforce calls its Dreamforce conference “the world’s largest AI event” of 2023

No, it wasn’t. I stepped into the conference for a day to meet a couple of colleagues for lunch. The conference had its usual vibe: thousands of people with hungover expressions, wearing blazers, slacks, blue lanyards, and sneakers, walking from session to session around San Francisco’s Moscone Center.

The branding, however, is a sign that the AI sweepstakes have arrived in the world of enterprise software. The sales pitch is also fairly trite by now: AI is progressing fast; it could be dangerous if not done right; trust us to figure it out for you.

But amidst all this hype and noise, a discussion on enterprise AI use cases is conspicuous in its absence. (Link)

The Pentagon withholds Lockheed’s fees ($8 million per plane) for delayed software integration testing.

As per a Bloomberg report, the estimated cost of the TR-3 (Tech Refresh-3) software upgrade has doubled from the initial estimate of $712 million. Lockheed isn’t expected to make any profit on its contract.

The report projects the cost of the upgraded software at $70-75 million per plane.

Unless each F-35 has bespoke hardware that necessitates heavy customization of the TR-3 software, these per-plane numbers make little sense. Also, the Pentagon’s per-plane payments suggest that the people negotiating its vendor contracts don’t understand how software gets built and deployed. (Link)

If you have any questions, suggestions, or feedback, please reply or email me at amarinder@amsidhu.com

If you enjoyed reading this, please share it with friends or colleagues. And feel free to send any ideas you find interesting to me!

Regards,

Amarinder